ATR System

Detect and Classify Mine-Like Objects

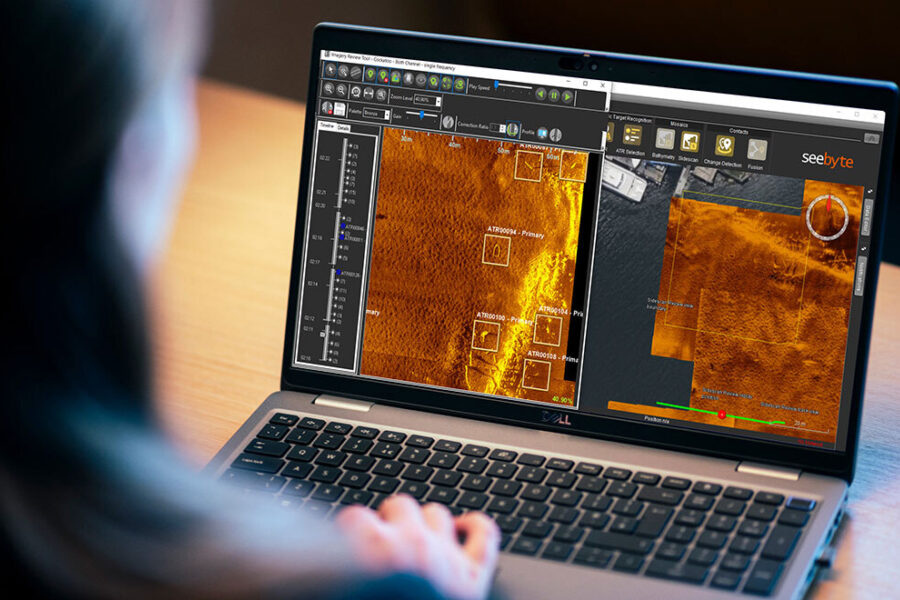

Automatic Target Recognition (ATR) is the tool of choice for analysing side-scan sonar data from autonomous maritime systems. Designed as an assist tool, the ATR provides a post-mission analysis (PMA) workflow with robust, reliable results, regardless of data volume. We have worked with the US, UK, Dutch, Belgian, Australian and New Zealand navies to provide them with our ATR system.

ATR System

Our ATR system allows users to run detection algorithms on their datasets, saving time and reducing workload and repetitive tasks. We train algorithms with data provided to us by our customers. Alternatively, our Software Development Kit (SDK) allows integration of third-party algorithms.

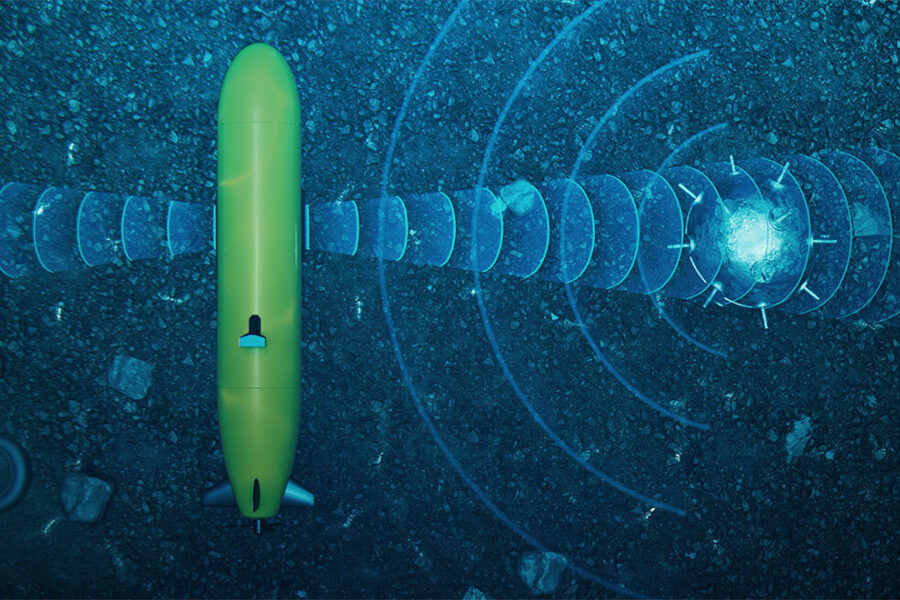

Embedded ATR

Our embedded ATR runs in real time on the vehicle. This enables our Neptune autonomy system to exploit and disseminate all contacts in-mission. Neptune can then task vehicles in the squad to perform dynamic reacquire and inspection tasks.

Related products

- SeeTrack

  SeeTrack is our internationally proven, multi-domain command and control system for single or multi-vehicle operations.

- Software Development Kit

  Our Software Development Kit (SDK) allows integration of third-party ATR algorithms or Fusion algorithms.

- Neptune

  Neptune is an intelligent autonomy system providing mission-level and collaborative autonomy for complex, multi-vehicle operations.

ATR FAQs

Minimum System Requirements

ATR requires SeeTrack (PMA)

Processor: Intel Core i5 (Core i7)

NVIDIA graphics card with at least 8 GB of RAM and Compute Capability 9.0 (or less)
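As a rough illustration of the GPU requirement above, the following sketch checks a graphics card description against the two stated limits. It is not part of the ATR or SeeTrack software. The names `GpuInfo`, `parse_gpu_line` and `meets_gpu_requirements` are hypothetical, and the input format (a comma-separated line of name, memory in MiB, and compute capability) is an assumption. Reading "9.0 (or less)" as an upper bound on compute capability is also an interpretation of the requirement, not a confirmed rule.

```python
from dataclasses import dataclass
from typing import Tuple

# Limits taken from the requirements listed above.
MIN_GPU_MEMORY_MIB = 8 * 1024          # "at least 8 GB"
MAX_COMPUTE_CAPABILITY = (9, 0)        # "9.0 (or less)", read as an upper bound


@dataclass
class GpuInfo:
    """Hypothetical container for the GPU properties relevant here."""
    name: str
    memory_mib: int
    compute_capability: Tuple[int, int]  # (major, minor)


def parse_gpu_line(line: str) -> GpuInfo:
    """Parse an assumed 'name, memory_mib, major.minor' description line."""
    name, memory, capability = [field.strip() for field in line.split(",")]
    major, minor = capability.split(".")
    return GpuInfo(name, int(memory), (int(major), int(minor)))


def meets_gpu_requirements(gpu: GpuInfo) -> bool:
    """Return True if the card satisfies both stated GPU limits."""
    enough_memory = gpu.memory_mib >= MIN_GPU_MEMORY_MIB
    # Tuples compare element-wise, so (8, 6) <= (9, 0) and (12, 0) > (9, 0).
    supported_capability = gpu.compute_capability <= MAX_COMPUTE_CAPABILITY
    return enough_memory and supported_capability


if __name__ == "__main__":
    example = parse_gpu_line("Example GPU, 10240, 8.6")
    print(example.name, meets_gpu_requirements(example))
```

Comparing the compute capability as a (major, minor) tuple rather than a floating-point number avoids rounding surprises when versions are compared.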

No. The ATR needs to be tuned per shape. A shape refers to a single size of a geometric object. For example, a 15 cm cylinder is one shape and a 30 cm cylinder is a second shape.

This depends on the geometry of the shape and how many false alarms you are happy to accept. It can take two to three thousand views of a target to get a well-tuned algorithm.

The ATR will only work on the sonar it is tuned for and at the same configuration (range, altitude, etc.).

Different sizes of targets will need separate tuning.
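One way to picture these constraints is to treat each tuning as being keyed by the shape, its size, the sonar, and the sonar configuration. The sketch below is purely illustrative and does not reflect how the ATR stores or selects its algorithms. Every name in it (`TuningKey`, `TUNED_ALGORITHMS`, `find_tuning`, the sonar label and the algorithm label) is hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class TuningKey:
    """Hypothetical key: everything that must match for a tuning to apply."""
    shape: str        # geometric object, e.g. "cylinder"
    size_cm: int      # a different size counts as a different shape
    sonar: str        # the sonar the algorithm was tuned for
    range_m: int      # sonar range used during tuning
    altitude_m: int   # vehicle altitude used during tuning


# Illustrative registry with a single tuned algorithm (made-up values).
TUNED_ALGORITHMS: Dict[TuningKey, str] = {
    TuningKey("cylinder", 15, "example-sonar", 50, 5): "cylinder-15cm-tuning",
}


def find_tuning(key: TuningKey) -> Optional[str]:
    """Return the tuned algorithm for an exact match, otherwise None."""
    return TUNED_ALGORITHMS.get(key)


if __name__ == "__main__":
    tuned = TuningKey("cylinder", 15, "example-sonar", 50, 5)
    larger = TuningKey("cylinder", 30, "example-sonar", 50, 5)
    print(find_tuning(tuned))   # the 15 cm cylinder has a tuning
    print(find_tuning(larger))  # the 30 cm cylinder needs its own tuning
```

In this picture, changing any one field, such as the target size or the sonar range, produces a key with no matching entry, which corresponds to needing a separate tuning.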

This is not possible currently. Machine learning algorithms require specialist software and vast computing resources to train. This can only be done at SeeByte’s office in Edinburgh, UK.

Yes, you can. The ATR system comes with a Software Development Kit that enables you to add your own algorithm, so you do not need to use SeeByte’s algorithm.